MathematiTron

A personalised AI math tutor built on Claude — per-student mastery tracking across a 111-concept curriculum graph.

Problem

A generic chat interface to an LLM is not a tutor. Raw Claude can explain any concept, but it has no memory of what a student has mastered, no structural model of prerequisites, and no way to pace toward a specific goal. Students who rely on a general-purpose chatbot such as ChatGPT to learn end up with fragmented understanding and nothing that tracks their progress.

Approach

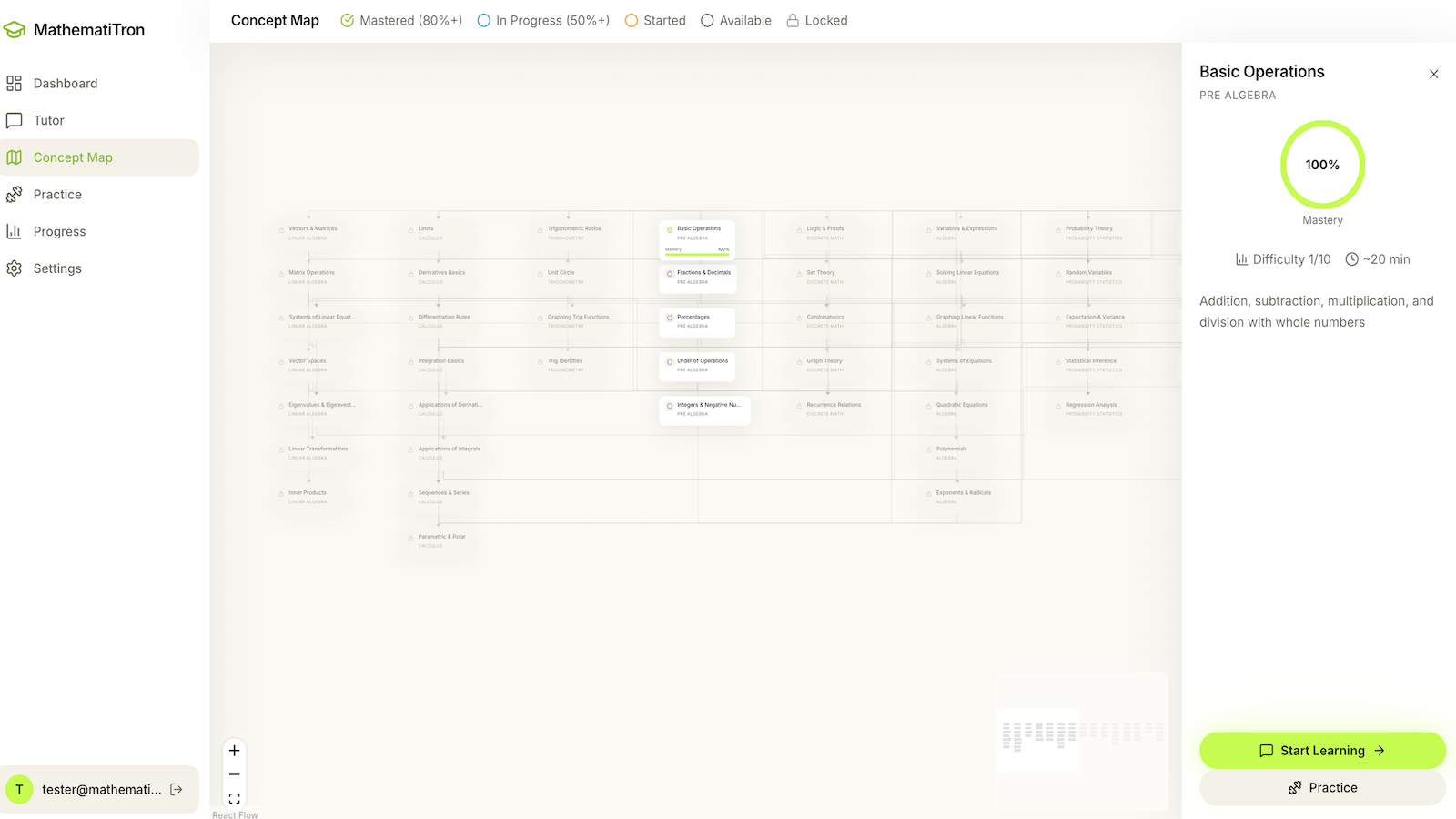

A React 19 + Express 5 stack with Claude Sonnet as the tutoring engine. A curriculum graph encodes 111 concepts across 15 categories — pre-algebra through topology — rendered in ReactFlow. A mastery layer scores per-concept progress against a 60% threshold and derives a personalised learning path. The tutor prompt is assembled from five layers (tutor identity, student profile, concept context, conversation history, and behavioural rules) and streams responses over Server-Sent Events. Supabase provides Postgres, auth, and row-level security; math renders in KaTeX with MathLive for expression input.
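As a rough illustration of how a mastery layer could turn per-concept scores into a learning path, the sketch below walks the prerequisite graph backwards from a goal concept and emits the unmastered concepts in prerequisite-first order. The `Concept` shape, the function names, the sample concept ids, and the choice to treat a score of exactly 60% as mastered are all assumptions made for this example. They are not taken from the project's code.

```typescript
// Hypothetical types: names and fields are illustrative, not the project's schema.
interface Concept {
  id: string;
  prerequisites: string[]; // ids of concepts that should be mastered first
}

// Threshold from the text. Treating exactly 60% as mastered is an assumption.
const MASTERY_THRESHOLD = 0.6;

function isMastered(scores: Map<string, number>, id: string): boolean {
  // Concepts with no recorded score are treated as unmastered.
  return (scores.get(id) ?? 0) >= MASTERY_THRESHOLD;
}

// Returns the unmastered concepts needed to reach `goal`, ordered so that
// every prerequisite appears before the concepts that depend on it.
// Assumes the graph is acyclic.
function learningPath(
  graph: Map<string, Concept>,
  scores: Map<string, number>,
  goal: string,
): string[] {
  const path: string[] = [];
  const visited = new Set<string>();

  const visit = (id: string): void => {
    if (visited.has(id)) return;
    visited.add(id);
    const concept = graph.get(id);
    if (!concept) throw new Error(`Unknown concept: ${id}`);
    // Assumption: a mastered concept ends the walk along this branch.
    if (isMastered(scores, id)) return;
    for (const pre of concept.prerequisites) visit(pre);
    path.push(id); // pushed only after all of its prerequisites
  };

  visit(goal);
  return path;
}

// Usage with a made-up three-concept chain.
const demoGraph = new Map<string, Concept>([
  ["fractions", { id: "fractions", prerequisites: [] }],
  ["linear-equations", { id: "linear-equations", prerequisites: ["fractions"] }],
  ["quadratics", { id: "quadratics", prerequisites: ["linear-equations"] }],
]);
const demoScores = new Map<string, number>([
  ["fractions", 0.8],
  ["linear-equations", 0.4],
]);
const demoPath = learningPath(demoGraph, demoScores, "quadratics");
// demoPath is ["linear-equations", "quadratics"]
```

Stopping the walk at a mastered concept keeps the path short, at the cost of trusting that mastery of a concept implies mastery of everything beneath it. A stricter variant would keep descending and include any unmastered prerequisite it finds.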

Synopsis

MathematiTron is scoped around the full tutoring loop: a curriculum graph, a mastery model, and a tutor service that wraps Claude with per-student context. The thesis is that an LLM should act as a tutor constrained by structure, memory, and pedagogy, rather than as a generic chat interface; the Anthropic SDK is a dependency, not the product. The audience is students preparing for a specific goal (an exam, a degree prerequisite, or a self-guided curriculum) who need pacing and progress tracking, not a transcript.
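To make "wraps Claude with per-student context" more concrete, here is a minimal sketch of how the five prompt layers listed under Approach might be combined into a single prompt string. The `PromptLayers` and `Turn` shapes, the section titles, and the rule of dropping empty layers are assumptions for illustration. The actual tutor service may structure its prompt differently, for example by sending conversation history as separate messages.

```typescript
// Hypothetical shapes: field names are illustrative, not the project's schema.
interface Turn {
  role: "student" | "tutor";
  text: string;
}

interface PromptLayers {
  tutorIdentity: string;
  studentProfile: string;
  conceptContext: string;
  conversationHistory: Turn[];
  behaviouralRules: string[];
}

// Joins the five layers, in the order the text lists them, into one string.
function assembleTutorPrompt(layers: PromptLayers): string {
  const history = layers.conversationHistory
    .map((turn) => `${turn.role}: ${turn.text}`)
    .join("\n");
  const rules = layers.behaviouralRules.map((rule) => `- ${rule}`).join("\n");

  const sections: Array<[string, string]> = [
    ["Tutor identity", layers.tutorIdentity],
    ["Student profile", layers.studentProfile],
    ["Concept context", layers.conceptContext],
    ["Conversation history", history],
    ["Behavioural rules", rules],
  ];

  return sections
    .filter(([, body]) => body.trim().length > 0) // drop empty layers
    .map(([title, body]) => `## ${title}\n${body.trim()}`)
    .join("\n\n");
}

// Usage with made-up content; a first session has no history yet.
const demoPrompt = assembleTutorPrompt({
  tutorIdentity: "You are a patient math tutor.",
  studentProfile: "Goal: pass a calculus entrance exam.",
  conceptContext: "Current concept: linear equations.",
  conversationHistory: [],
  behaviouralRules: ["Ask one question at a time.", "Never give the final answer first."],
});
```

Keeping assembly in a pure function like this makes each layer easy to test and swap independently of the model call and the streaming transport.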

Gallery

Curriculum as a prerequisite graph

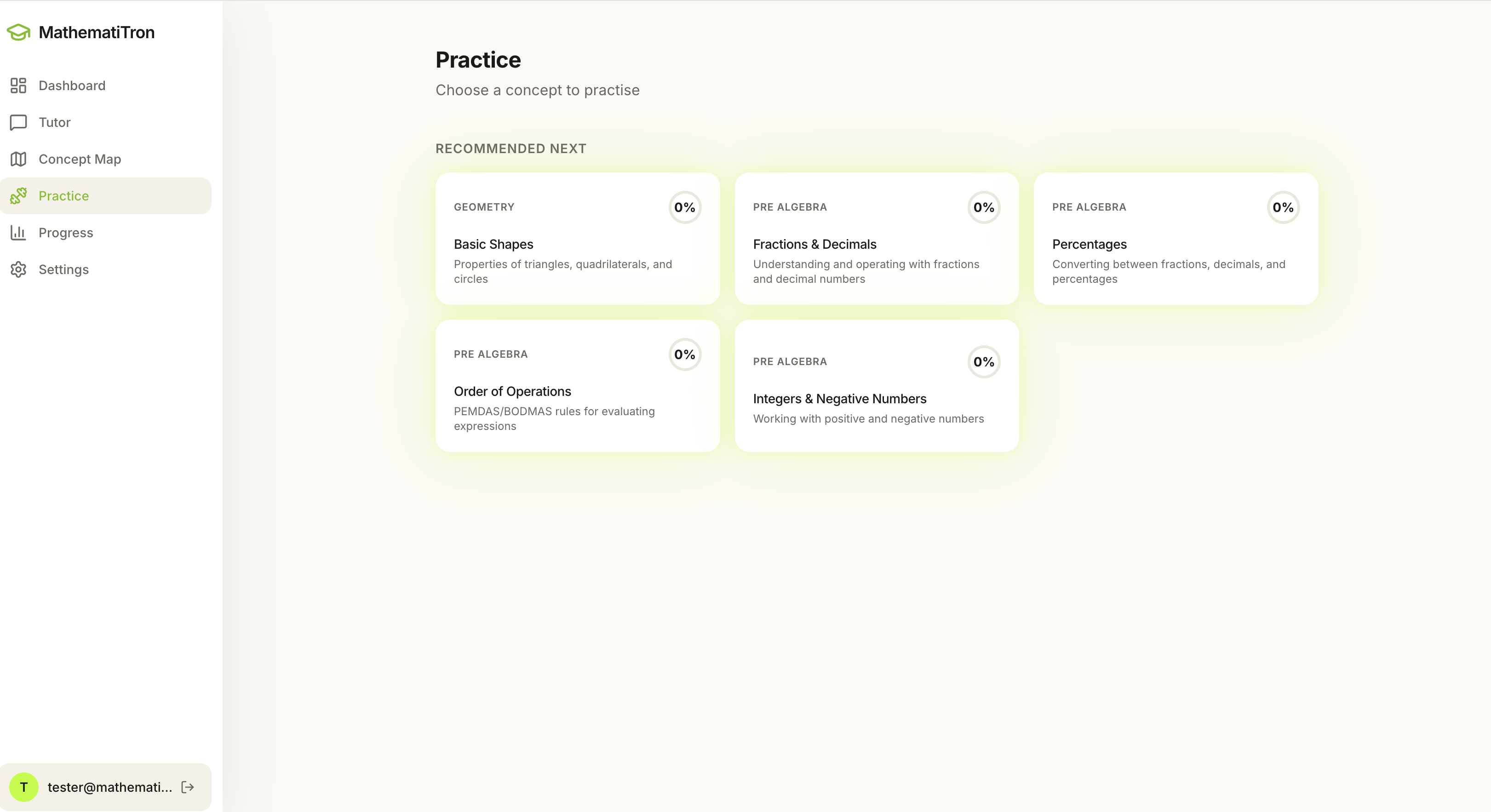

The practice surface

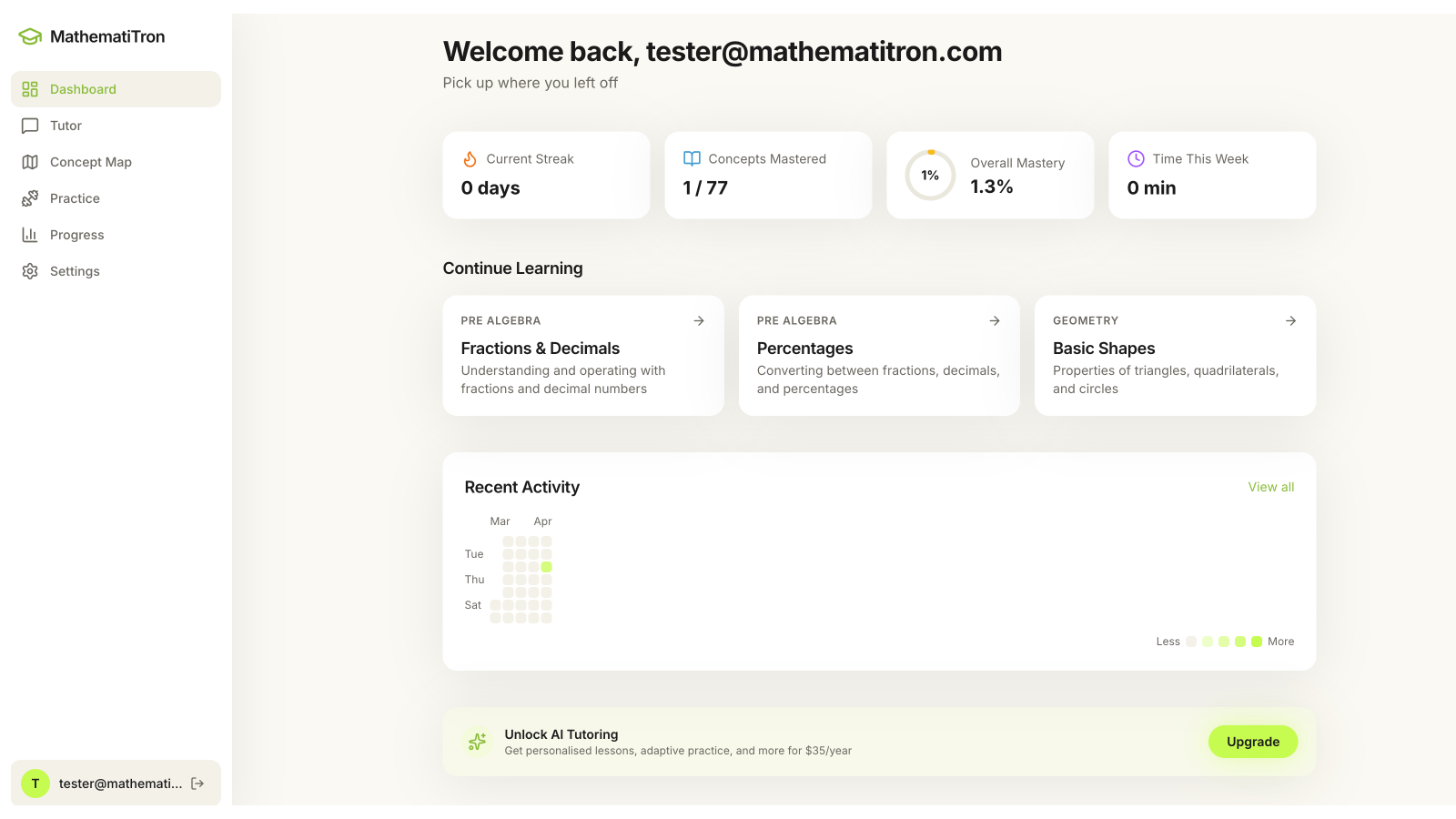

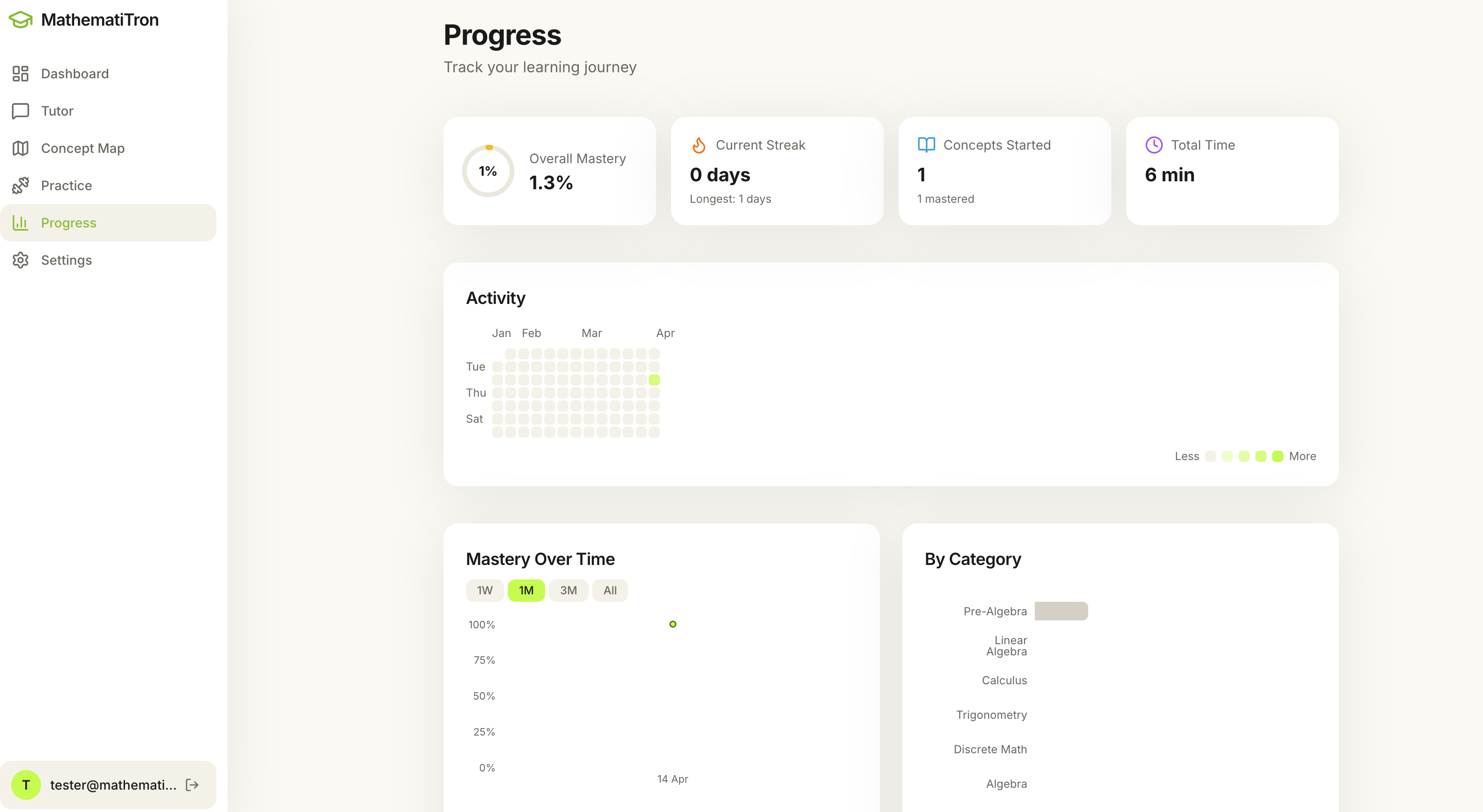

Per-student progress dashboard

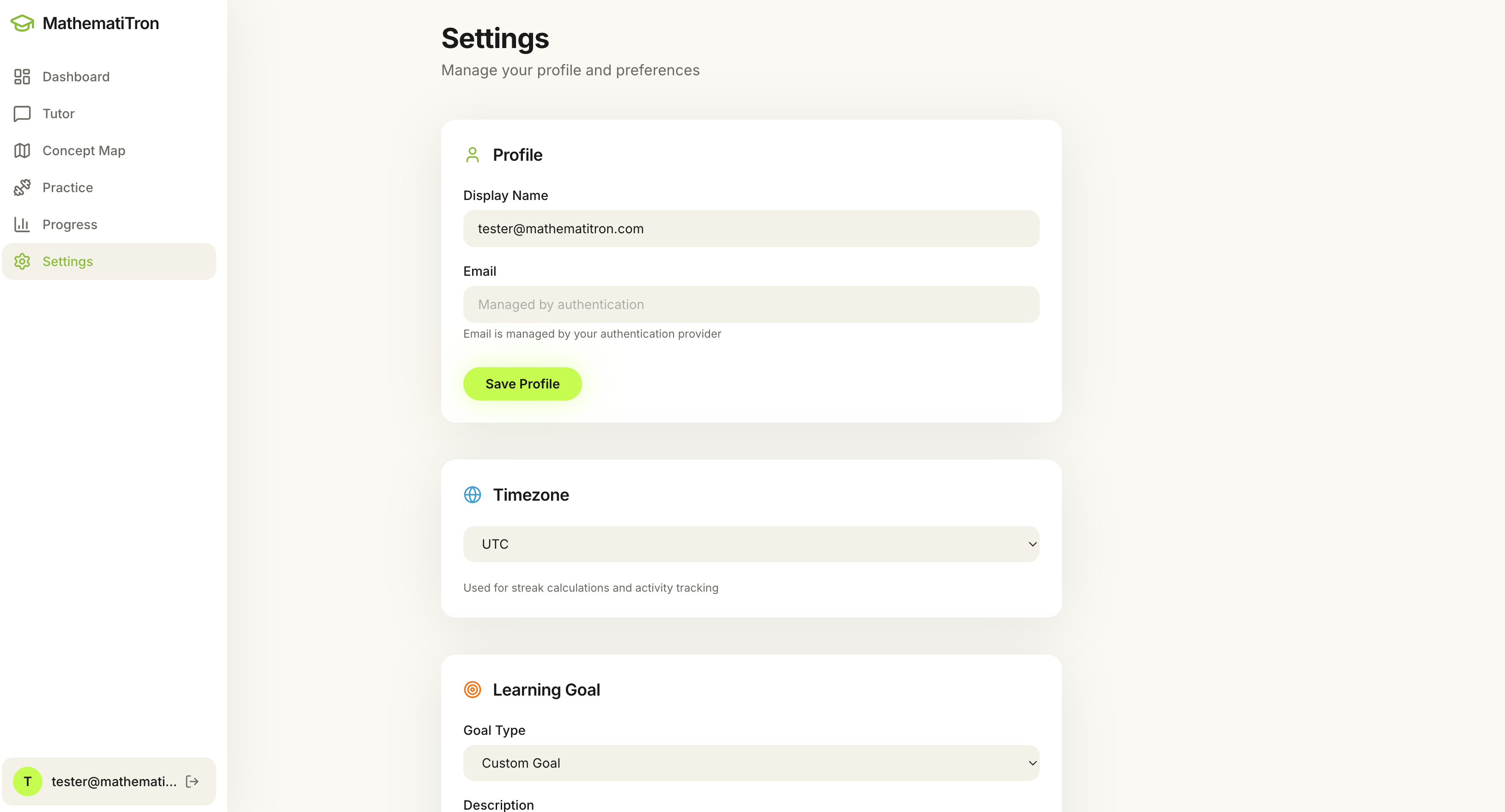

Goal configuration